production deployment updates take an age

the first attempt at deploying 26651216 timed out after 1 hour. it had only got as far as completing storage001 (it was still working on storage002).

transferring our packages from saxtons to the storage nodes takes forever. why? on saxtons the Send-Q for a connection to port 80 is huge (many hundreds of kB), while CPU on both saxtons and the storage node is essentially idle.
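Large Send-Q values can be spotted without eyeballing by scanning `ss -tn` output. Send-Q there is data queued on the local socket that the peer has not yet acknowledged, so a persistently large value while both CPUs are idle points at the network path rather than either host. A minimal sketch, assuming the usual `ss -tn` column order (State, Recv-Q, Send-Q, Local Address:Port, Peer Address:Port); the function name and the 100 kB threshold are hypothetical.

```python
def big_send_queues(ss_output, port=80, threshold=100_000):
    """Return (send_q, local, peer) for TCP sockets with `port` at either end
    whose Send-Q exceeds `threshold` bytes, largest first.

    Assumes `ss -tn` layout: State, Recv-Q, Send-Q, Local Address:Port,
    Peer Address:Port (any trailing columns are ignored).
    """
    hits = []
    for line in ss_output.splitlines()[1:]:  # first line is the header
        fields = line.split()
        if len(fields) < 5 or not fields[2].isdigit():
            continue
        send_q, local, peer = int(fields[2]), fields[3], fields[4]
        # split on the last ":" so IPv6 endpoints like [::1]:80 still work
        ends = {local.rsplit(":", 1)[-1], peer.rsplit(":", 1)[-1]}
        if str(port) in ends and send_q > threshold:
            hits.append((send_q, local, peer))
    return sorted(hits, reverse=True)
```

On saxtons this would be fed something like `subprocess.run(["ss", "-tn"], capture_output=True, text=True).stdout`.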

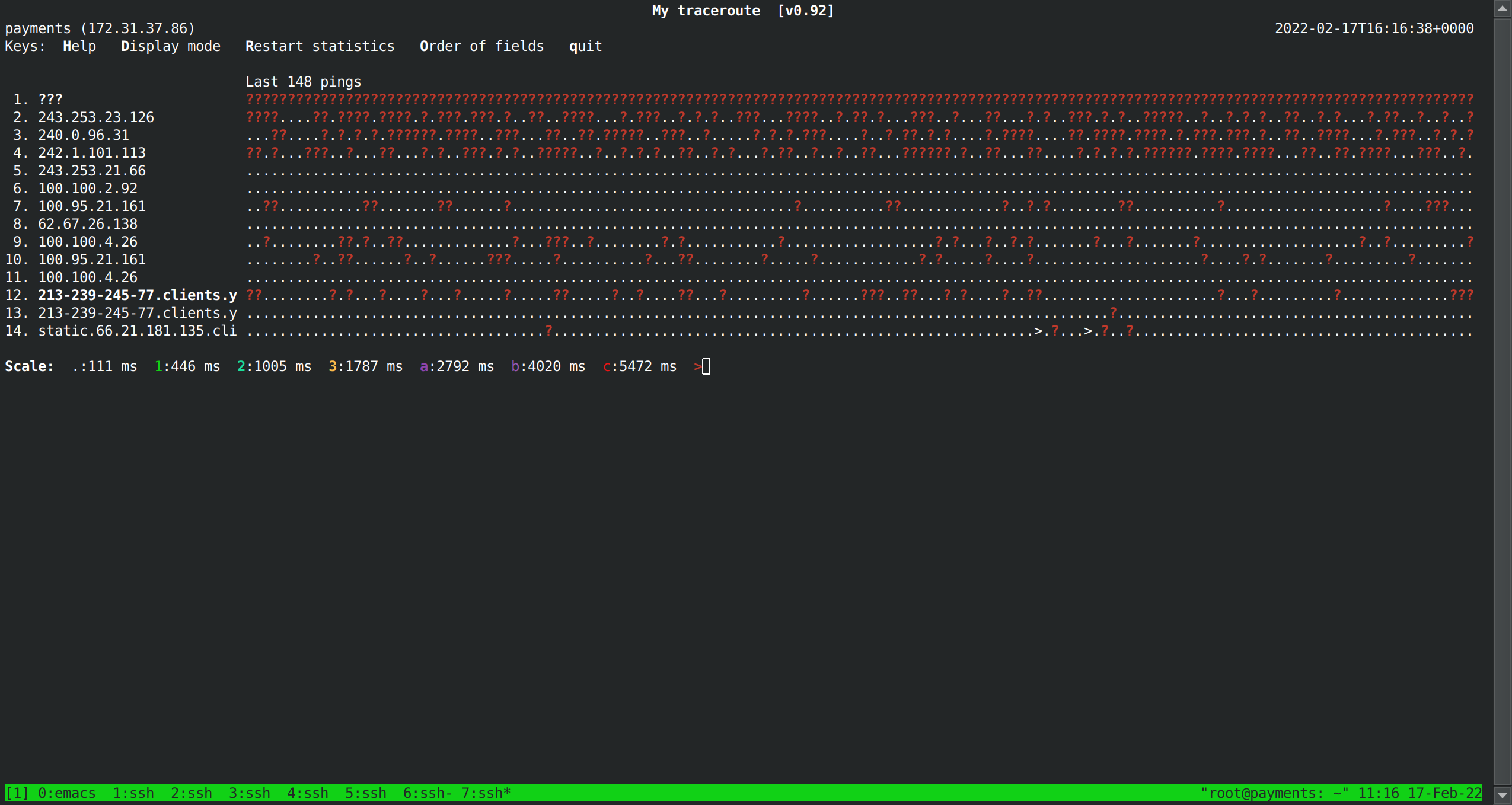

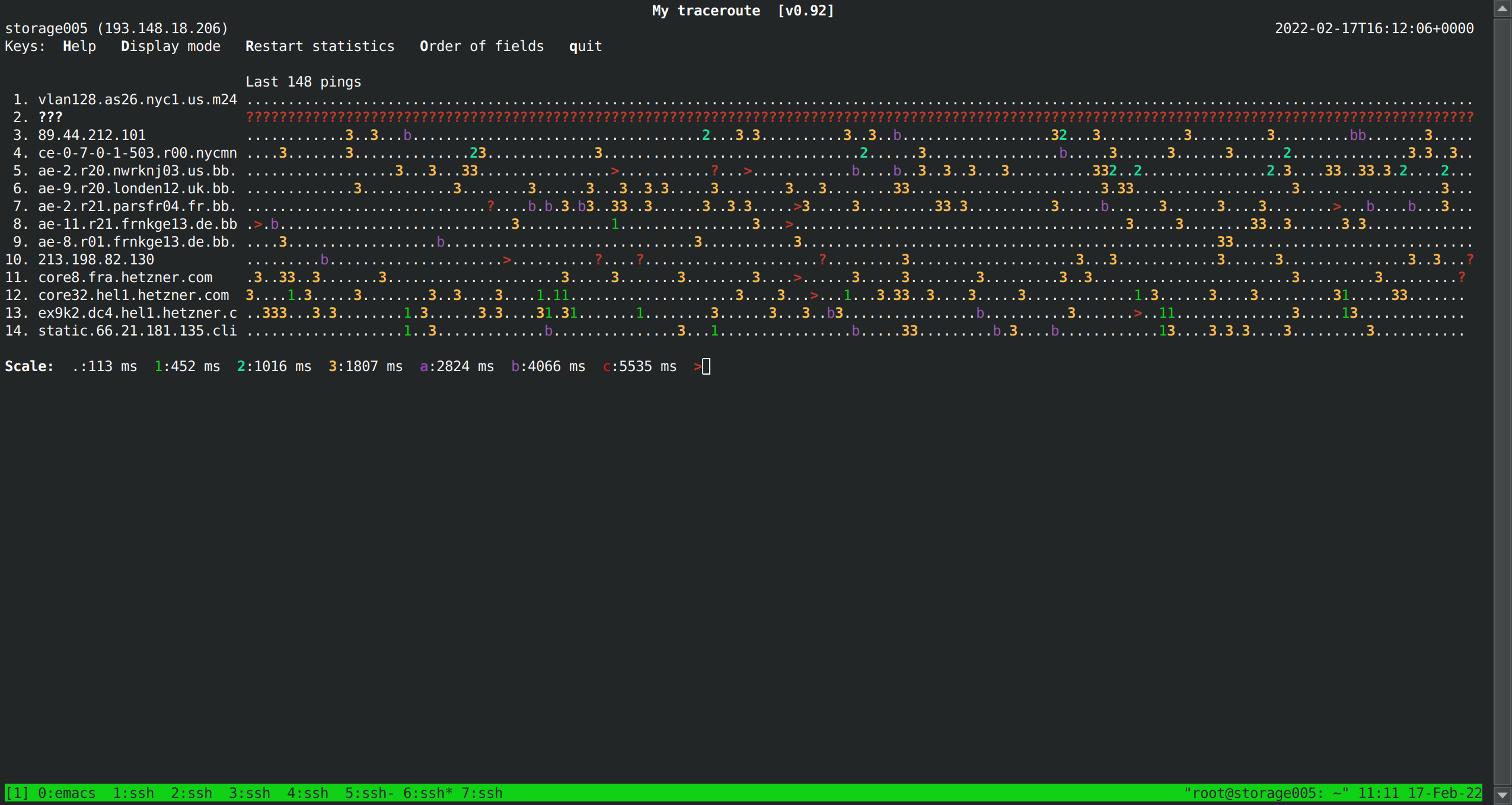

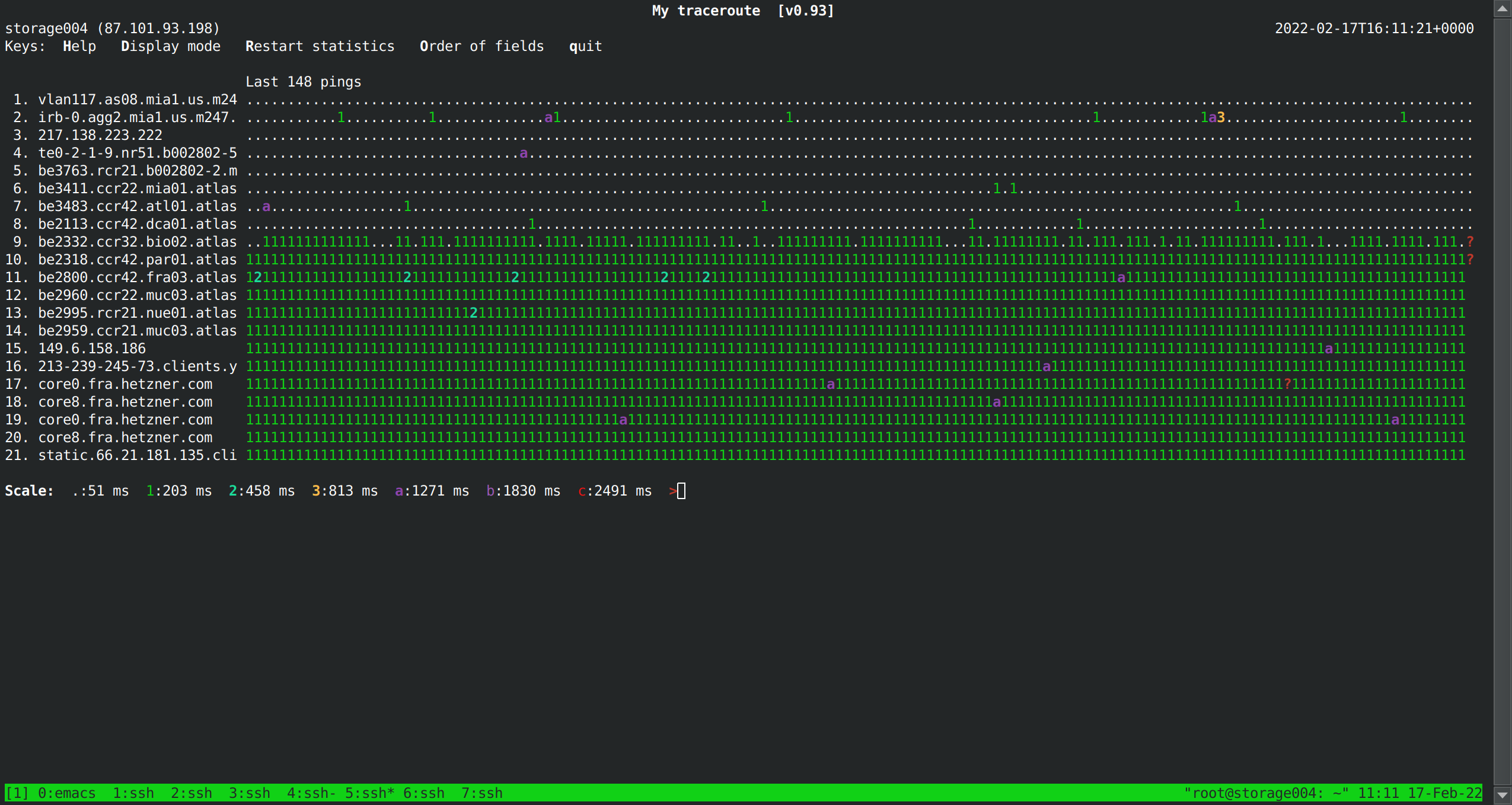

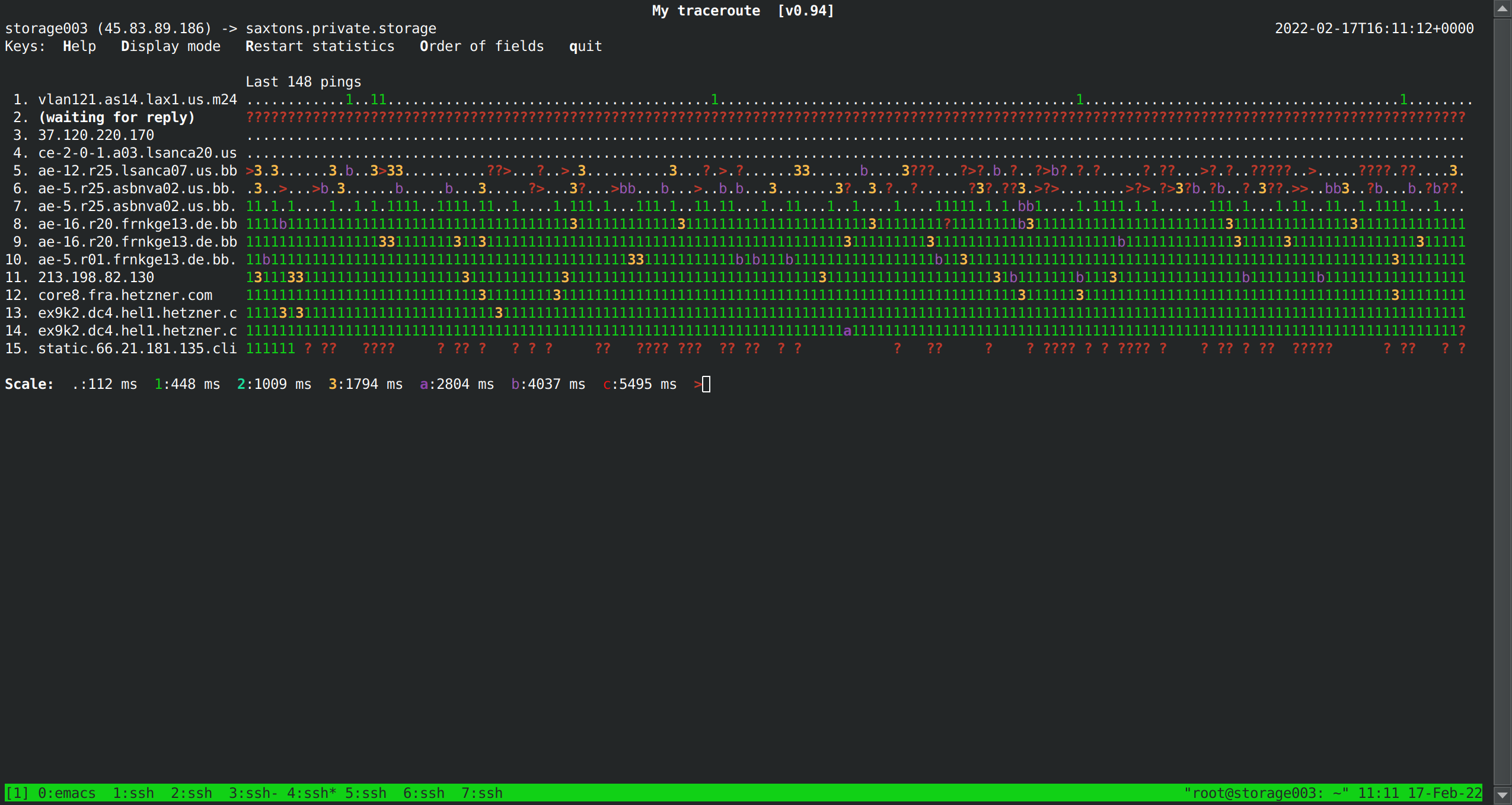

network traffic conditions on storage001-storage005 seem catastrophically awful

some messages I sent on Slack

Measured transfer speed of a 100MB file from saxtons to storage001-storage005 using scp:

storage001 - 17 MB/sec

storage002 - 9 MB/sec

storage003 - <200KB/sec (didn't wait for it to finish)

storage004 - 11 MB/sec

storage005 - <200KB/sec (didn't wait for it to finish)

very sloppy methodology but ... <200KB/sec???
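A slightly less sloppy version of the scp measurement times each copy and gives up after a fixed timeout, reporting an upper bound for the slow nodes instead of waiting indefinitely. A minimal sketch; the function names, the 120 s default timeout and the destination path in the usage note are hypothetical.

```python
import subprocess
import time


def mb_per_sec(num_bytes, seconds):
    """Throughput in MB/s, with 1 MB = 10**6 bytes."""
    return num_bytes / seconds / 1e6


def time_scp(path, size_bytes, dest, timeout=120.0):
    """Copy `path` to `dest` with scp and return a throughput string.

    If scp is still running after `timeout` seconds it is killed and only
    an upper bound can be reported: fewer than `size_bytes` bytes were
    moved in `timeout` seconds.
    """
    start = time.monotonic()
    try:
        subprocess.run(["scp", "-q", path, dest], check=True, timeout=timeout)
    except subprocess.TimeoutExpired:
        return f"< {mb_per_sec(size_bytes, timeout):.2f} MB/s (gave up after {timeout:.0f} s)"
    # includes SSH connection setup, so slightly pessimistic for short copies
    return f"{mb_per_sec(size_bytes, time.monotonic() - start):.2f} MB/s"
```

For example, create the file with `head -c 100000000 /dev/urandom > test.bin` (random data, so compression can't flatter the result), then call `time_scp("test.bin", 100_000_000, "storage003:/tmp/")` for each node.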

slightly improved methodology ...

speedtest-cli [multiple runs] ...

storage001 - [656 Mbit/s, 120 Mbit/s, 768 Mbit/s]

storage002 - [91 Mbit/s, 93 Mbit/s, 46 Mbit/s] (!)

storage003 - [337 Mbit/s, 377 Mbit/s, 717 Mbit/s] (!)

storage004 - [743 Mbit/s, 745 Mbit/s, 803 Mbit/s]

storage005 - [210 Mbit/s, 540 Mbit/s, 545 Mbit/s]

saxtons - [446 Mbit/s, 436 Mbit/s, 570 Mbit/s] (*)

(!) these don't look that bad, but it did take ~10 minutes to download the speedtest tool... which doesn't really mesh with the speedtest results themselves. :/

(*) Hetzner operates a speedtest server, so I overrode the default server selection to pick a non-Hetzner server; otherwise we'd just be measuring a Hetzner-to-Hetzner transfer.
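The server override can be scripted. A minimal sketch, assuming the Python speedtest-cli prints `--list` rows as `ID) Sponsor (City, Country) [distance km]`, nearest first; only the leading ID is actually parsed, and the function name is hypothetical. The chosen ID would then be passed as `speedtest-cli --server ID`.

```python
import re
from typing import Optional

# rows from `speedtest-cli --list`; only the leading numeric ID is relied on
LIST_ROW = re.compile(r"^\s*(\d+)\)\s+(.*)$")


def first_server_not_from(list_output: str, unwanted: str = "hetzner") -> Optional[str]:
    """Return the ID of the first listed server whose description does not
    mention `unwanted` (case-insensitive), or None if there is none.

    Assuming the list is printed nearest first, this is the closest
    server not run by `unwanted`.
    """
    for line in list_output.splitlines():
        m = LIST_ROW.match(line)
        if m and unwanted.lower() not in m.group(2).lower():
            return m.group(1)
    return None
```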

locations -

storage001 - New York

storage002 - LA

storage003 - LA

storage004 - Miami

storage005 - Miami

Other random observations ... a hop on the route from storage003 to saxtons (`ae-5.r25.asbnva02.us.bb.gin.ntt.net`) has 30+% packet loss. end-to-end packet loss is 0.9%, 9x the backbone-to-backbone loss allowed by the M247 SLA. ping time is around 180ms right now; I briefly saw it at around 300ms even to the first hop on the route.

storage001 and storage005 have 15% packet loss on all hops to saxtons after the 2nd. storage004 has 1% end-to-end loss. only storage002 looks kind of reasonable at 0.1% loss.
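The gap between the speedtest and scp numbers fits these loss figures. A single TCP flow's steady-state throughput is roughly capped by the Mathis et al. (1997) model, (MSS / RTT) × (C / √p) with C = √(3/2) ≈ 1.22. A rough sketch plugging in storage003's ~180 ms RTT and 0.9% end-to-end loss from above; the 1460-byte MSS is an assumption (typical for a 1500-byte MTU), not something measured here.

```python
from math import sqrt


def mathis_limit(mss_bytes, rtt_s, loss_rate, c=sqrt(3 / 2)):
    """Rough ceiling on one TCP flow's throughput in bytes/s:
    (MSS / RTT) * (C / sqrt(p)), after Mathis et al. (1997)."""
    return (mss_bytes / rtt_s) * (c / sqrt(loss_rate))


# storage003 -> saxtons: ~180 ms RTT, 0.9% end-to-end loss (observed above);
# MSS of 1460 bytes is assumed
limit = mathis_limit(1460, 0.180, 0.009)
print(f"storage003 single-flow ceiling: ~{limit / 1000:.0f} kB/s")
```

This prints a ceiling of roughly 105 kB/s, the same order as the <200KB/sec scp rate to storage003. At the 15% loss seen from storage001 and storage005, the loss term alone is about 4x worse (√(0.15/0.009) ≈ 4.1) for the same RTT. speedtest-cli, by contrast, presumably measures the path to a nearby test server rather than the path to saxtons, so it wouldn't be expected to show this.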

all those packet-related measurements taken with

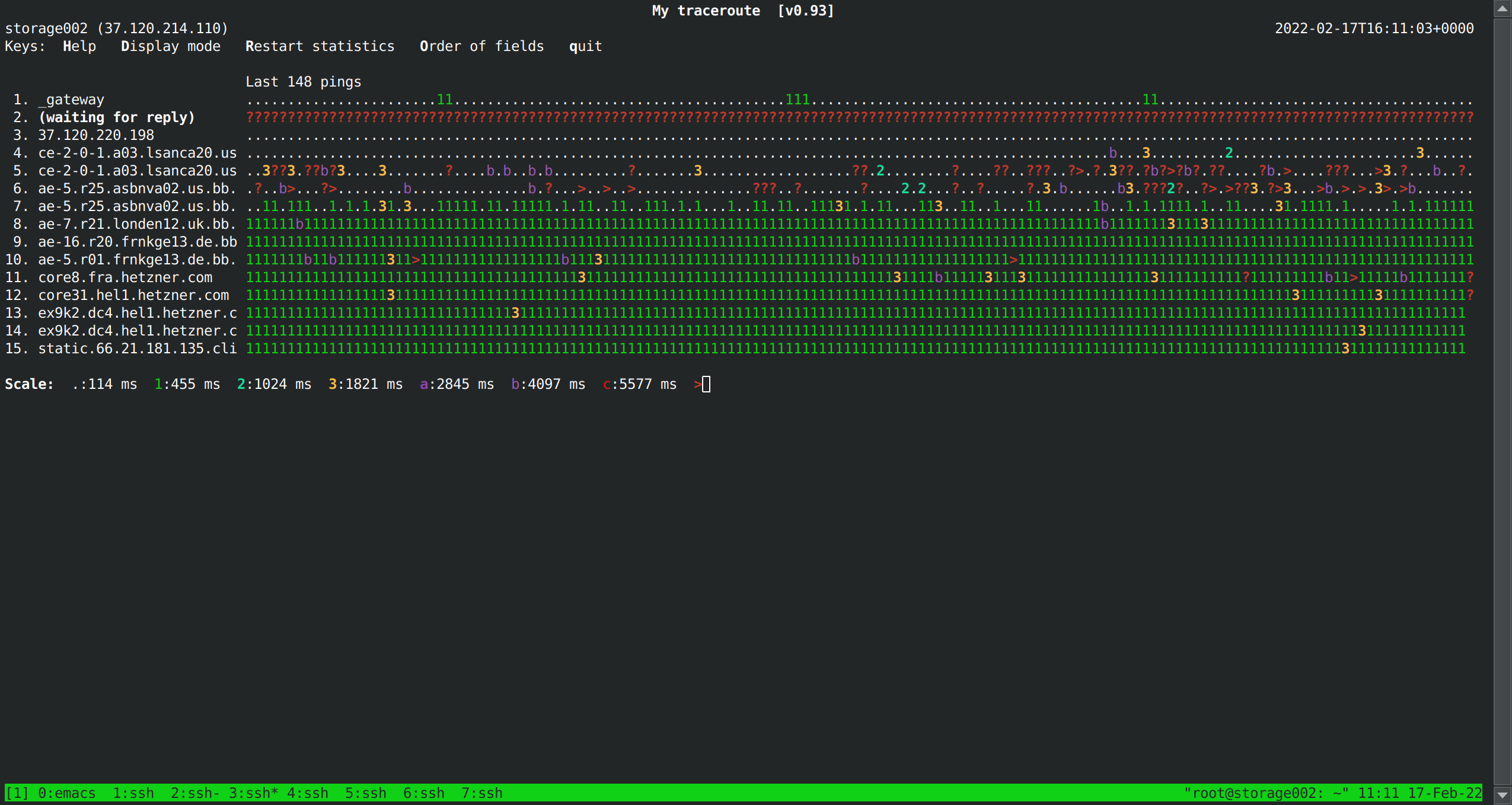

mtrwithmtr -TI can see multipath routing going on which (at least initially) seems to have lower loss but massively higher rtts (1-7s for some hops)
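To compare nodes side by side, the per-hop and end-to-end loss can be pulled out of mtr's report mode, e.g. `mtr --report --report-wide --report-cycles 100 --tcp saxtons` run on each storage node (the target hostname is a placeholder). A minimal sketch, assuming the usual report row layout `N.|-- host Loss% Snt Last Avg Best Wrst StDev`; the class and function names and the 1% threshold are hypothetical.

```python
import re
from typing import List, NamedTuple, Optional


class Hop(NamedTuple):
    n: int
    host: str
    loss_pct: float
    avg_ms: float


# rows look like: "  2.|-- <host>  <Loss%>  <Snt>  <Last>  <Avg>  <Best>  <Wrst>  <StDev>"
ROW = re.compile(r"^\s*(\d+)\.\|-+\s+(\S+)\s+([\d.]+)%?\s+\d+\s+[\d.]+\s+([\d.]+)")


def parse_mtr_report(text: str) -> List[Hop]:
    """Extract numbered hop rows; anything else (headers etc.) is skipped."""
    hops = []
    for line in text.splitlines():
        m = ROW.match(line)
        if m:
            hops.append(Hop(int(m.group(1)), m.group(2), float(m.group(3)), float(m.group(4))))
    return hops


def summarize(hops: List[Hop], threshold_pct: float = 1.0) -> dict:
    """End-to-end loss is the final hop's; also list hops above the threshold."""
    end_to_end: Optional[float] = hops[-1].loss_pct if hops else None
    lossy = [(h.n, h.host, h.loss_pct) for h in hops if h.loss_pct > threshold_pct]
    return {"end_to_end_loss_pct": end_to_end, "lossy_hops": lossy}
```

Reporting the two separately matters: loss at an intermediate hop that does not carry through to later hops is commonly down to routers deprioritising or rate-limiting replies to probes aimed at themselves, so the final hop's figure (0.9% for storage003, versus 30+% at the NTT hop) is usually the one that reflects what the transfer actually sees.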